Browse Abandonment Email Optimization: The Testing and Analytics Framework That Turns a Good Flow Into a Great One

Your browse abandonment flow isn't done when it's live. The metrics, A/B testing framework, and quarterly audit cadence that make good flows compound into great ones.

Setting up a browse abandonment flow is the beginning, not the end. Here's the complete framework for measuring what matters, testing what moves the needle, and building a program that compounds over time.

Most browse abandonment flows are built once.

A team member sets up the trigger, configures the dynamic product block, writes two emails, connects the SMS path, and activates the flow. It runs. Revenue comes in. The flow joins the list of "things that are working" and the team moves on to the next project.

Six months later, the flow is still running. Nobody has looked at it. The conversion rate has drifted down by 30 percent — partly because a product catalog update broke one of the dynamic image URLs, partly because the copy was written for an audience that has since shifted, partly because a competitor started sending better browse abandonment emails and the contrast sharpened the weakness of yours. Nobody noticed because nobody was looking.

This is the gap that separates email programs that consistently perform from ones that peaked at setup. Not the quality of the initial build. Not the sophistication of the segmentation architecture. Not the copy or the design or the discount decision.

The gap is whether anyone is paying attention after the flow goes live.

Chase Dimond has said it plainly and repeatedly across his courses, podcasts, and published work: automated flows are not set-it-and-forget-it. They run automatically. They do not optimize automatically. The optimization — the relentless, data-driven, incrementally compounding work of making the flow better over time — requires human attention on a deliberate cadence.

This final chapter of the series is about that work. How to measure browse abandonment performance correctly. How to build an A/B testing architecture that produces real signal. How to attribute revenue honestly. And how to build the quarterly audit habit that separates email programs generating 20 to 30 percent of brand revenue from those stuck at 5 percent.

The Metrics That Matter: Building the Right Dashboard

The first step in browse abandonment optimization is measuring the right things. This sounds obvious and is widely ignored. Most brands track the metrics their email platform surfaces by default — open rate, click rate, revenue — without asking whether those metrics are accurately measuring what they actually care about.

They often aren't. Here is the full metrics hierarchy for browse abandonment, organized from most to least important.

Primary KPI: Revenue Per Recipient (RPR)

RPR is total flow revenue divided by the number of emails delivered. It is the single number that captures what you ultimately care about: how much revenue does each email sent actually generate?

RPR accounts for both conversion rate and average order value simultaneously. A flow with a high conversion rate on low-AOV orders can have a lower RPR than a flow with a modest conversion rate on high-AOV orders. Open rate, click rate, and even raw conversion rate can all look healthy while RPR is declining, for example if the emails are being opened and clicked but not converting to meaningful order values, or if the audience is skewing toward lower-AOV product categories.
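A minimal sketch of that calculation follows. The two flows and all of their figures are hypothetical, chosen only to show how a flow with the higher conversion rate can still lose on RPR.

```python
def revenue_per_recipient(total_revenue: float, emails_delivered: int) -> float:
    """RPR = total flow revenue / emails delivered."""
    if emails_delivered <= 0:
        raise ValueError("emails_delivered must be a positive count")
    return total_revenue / emails_delivered


# Hypothetical figures, used only to illustrate the trade-off.
flows = {
    "high conversion, low AOV": {"delivered": 10_000, "orders": 300, "aov": 40.0},
    "modest conversion, high AOV": {"delivered": 10_000, "orders": 200, "aov": 90.0},
}

for name, f in flows.items():
    revenue = f["orders"] * f["aov"]
    conversion = f["orders"] / f["delivered"]
    rpr = revenue_per_recipient(revenue, f["delivered"])
    print(f"{name}: conversion {conversion:.1%}, RPR ${rpr:.2f}")
```

In this made-up example the second flow converts less often but earns more per email delivered, which is the pattern a conversion-rate-only dashboard would hide.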

Thomas Lalas, author of Retention Economics and a Fractional Director of Retention for eight-to-nine figure CPG brands, uses RPR as the primary metric for every flow he manages. His reasoning is direct: RPR is the only metric that directly connects email activity to business outcome. Everything else is a proxy.

Calculate RPR weekly. Track it over time. When it declines, the decline is a diagnostic signal — something changed, either in the flow, the audience, or the catalog — and it is your cue to investigate. When it improves, the improvement validates a test or confirms that an optimization worked.

Secondary KPI: Conversion Rate (True)

True conversion rate for browse abandonment is: orders generated by the flow divided by emails delivered. Not clicks. Not opens. Orders.

This is distinct from Klaviyo's default conversion attribution, which attributes revenue to an email if a subscriber places any order within a defined window after receiving the email — regardless of whether they clicked it. We'll address attribution in detail later in this chapter.

Track conversion rate separately by segment path. The overall flow conversion rate is a blended number that conceals meaningful variation. First-time browsers convert at a different rate than loyal buyers. High-intent browsers convert at a higher rate than single-view casual browsers. Seeing only the blended number prevents you from identifying which segment is underperforming and why.
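To see how a blended rate can conceal segment-level variation, here is a small sketch. The segment names follow the four paths used in this series; the delivered and order counts are invented for illustration.

```python
# Hypothetical per-path counts (orders generated by the flow, emails delivered).
segments = {
    "first_time_buyer": {"delivered": 6000, "orders": 60},
    "one_time_buyer": {"delivered": 2500, "orders": 50},
    "loyal_buyer": {"delivered": 1000, "orders": 50},
    "high_intent_browser": {"delivered": 500, "orders": 40},
}


def conversion_rate(orders: int, delivered: int) -> float:
    """True conversion rate: orders divided by emails delivered."""
    return orders / delivered if delivered else 0.0


# Blended rate across every path.
blended = conversion_rate(
    sum(s["orders"] for s in segments.values()),
    sum(s["delivered"] for s in segments.values()),
)

# Rate for each path on its own.
per_segment = {
    name: conversion_rate(s["orders"], s["delivered"])
    for name, s in segments.items()
}

print(f"blended: {blended:.1%}")
for name, rate in per_segment.items():
    print(f"{name}: {rate:.1%}")
```

With these numbers the blended figure sits in the middle of a wide spread, so a drop in one path could go unnoticed if only the blended rate were tracked.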

Secondary KPI: Click-to-Open Rate (CTOR)

CTOR — clicks divided by opens — is the primary measure of email content effectiveness independent of subject line performance. It answers a specific question: of the subscribers who opened the email, what proportion found the content compelling enough to click?

A low CTOR against a high open rate tells you the subject line is doing its job but the email body is not. The subscriber opened, found the content disappointing or unclear, and closed without clicking. This is a copy and design problem, not a subject line problem.

A high CTOR against a low open rate tells you the email body is strong but the subject line is losing subscribers before they get to it. This is a subject line problem.

Separating these two performance problems — which a raw click rate cannot do — is why CTOR belongs in your standard browse abandonment reporting.
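The diagnostic logic above can be sketched as a small helper. The benchmark values passed in are placeholders you would replace with your own historical averages; nothing here is an industry standard, and the function names are hypothetical.

```python
def ctor(unique_clicks: int, unique_opens: int) -> float:
    """Click-to-open rate: clicks divided by opens."""
    return unique_clicks / unique_opens if unique_opens else 0.0


def diagnose(open_rate: float, ctor_value: float,
             open_benchmark: float, ctor_benchmark: float) -> str:
    """Point at the likely weak spot by comparing against your own benchmarks."""
    opens_ok = open_rate >= open_benchmark
    clicks_ok = ctor_value >= ctor_benchmark
    if opens_ok and not clicks_ok:
        return "email body"      # opened, but content did not earn the click
    if clicks_ok and not opens_ok:
        return "subject line"    # content works, too few people reach it
    if not opens_ok and not clicks_ok:
        return "both"
    return "healthy"


# Hypothetical example: 4,000 opens, 200 clicks, 10,000 delivered.
rate = ctor(200, 4000)
print(diagnose(open_rate=0.40, ctor_value=rate,
               open_benchmark=0.35, ctor_benchmark=0.10))
```

Because reported opens are inflated for some subscribers, treat the output as a prompt for investigation rather than a verdict.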

Watch Metric: Unsubscribe Rate

The unsubscribe rate for browse abandonment emails should be lower than your campaign send average — because browse abandonment emails are behaviorally triggered and therefore more relevant than batch sends. If your browse abandonment unsubscribe rate is at or above your campaign average, something is wrong: the flow is sending too frequently, the tone is off, or the emails are reaching the wrong audience segments.

Investigate any unsubscribe rate above 0.3 percent on browse abandonment emails. Triggered emails typically see lower unsubscribe rates than campaigns. If your browse flow is generating campaign-level unsubscribes, it is behaving like a campaign (undifferentiated, high-frequency, low-relevance) rather than like a behavioral follow-up.

Watch Metric: Spam Complaint Rate

Zero tolerance is the right standard for spam complaints in any email flow. Klaviyo will pause your account if your complaint rate exceeds 0.08 percent, and the stricter sender policies Gmail and Yahoo have implemented mean that even complaint rates below that level can put deliverability at risk.

For browse abandonment specifically, spam complaints spike when the re-entry throttle is not set correctly, when unengaged subscribers are included in the audience, or when the browsed product data is misconfigured and the email arrives showing the wrong product or no product. All three of these are technical problems with operational fixes. A rising spam complaint rate in your browse flow is always a sign to stop, diagnose, and fix before the flow continues sending.
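The two watch metrics can be checked with a simple guardrail. This is a sketch that uses the 0.3 percent and 0.08 percent figures quoted in this chapter as limits; the function name and the idea of running it as a weekly check are assumptions, not a Klaviyo feature.

```python
UNSUBSCRIBE_LIMIT = 0.003   # 0.3 percent, the investigation threshold from the text
COMPLAINT_LIMIT = 0.0008    # 0.08 percent, the complaint threshold from the text


def guardrail_alerts(delivered: int, unsubscribes: int, complaints: int) -> list[str]:
    """Return a list of warnings for one reporting period of the browse flow."""
    alerts: list[str] = []
    if delivered <= 0:
        return alerts  # nothing sent, nothing to evaluate
    unsub_rate = unsubscribes / delivered
    complaint_rate = complaints / delivered
    if unsub_rate > UNSUBSCRIBE_LIMIT:
        alerts.append(f"unsubscribe rate {unsub_rate:.2%} is above 0.30%")
    if complaint_rate > COMPLAINT_LIMIT:
        alerts.append(f"spam complaint rate {complaint_rate:.3%} is above 0.080%")
    return alerts


# Hypothetical week: 10,000 delivered, 40 unsubscribes, 10 complaints.
for alert in guardrail_alerts(10_000, 40, 10):
    print(alert)
```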

Contextual Metric: Open Rate (Post-MPP)

Open rate in 2026 is a contextual metric, not a performance metric.

Apple Mail Privacy Protection, introduced in iOS 15, pre-loads email images for Apple Mail users who have it enabled, regardless of whether they open the email, registering a phantom open in every email platform's reporting. Depending on your audience composition, Apple Mail users may represent 40 to 60 percent of your list. For those with the feature enabled, every email you send shows as opened whether or not it was.

The practical consequence: your reported open rate is significantly inflated and cannot be used as a reliable measure of actual opens or subscriber engagement.

Use open rate for directional trend analysis only. If open rate on your browse abandonment flow drops sharply over a short period, it may indicate a deliverability problem (emails going to spam, where they're not opened at all) or a subject line problem. But a stable open rate is not evidence of health, and a high open rate is not evidence of engagement.

Jeanne Jennings, founder of Email Optimization Shop and one of the most rigorous methodologists in the email industry, recommends shifting primary engagement measurement from open rate to click rate and CTOR for all flows in the post-MPP environment. The click is an unambiguous signal of engagement. The open is not.

Attribution: Giving Browse Abandonment Its Fair Share — But Not More

Attribution is where browse abandonment reporting gets philosophically complex and practically consequential.

Klaviyo's default attribution model assigns revenue to the most recent email a subscriber received within a defined conversion window — typically five days. This means if a subscriber receives a browse abandonment email on Monday and places an order on Friday, that order is attributed to the browse abandonment email regardless of what else happened in between — whether they also received a campaign email, clicked a paid social ad, saw a retargeting banner, or simply decided to buy on their own timeline.

This model tends to overstate browse abandonment's contribution to revenue. The subscriber who was going to buy anyway and happened to receive a browse abandonment email in the preceding five days registers as a browse abandonment conversion. The flow gets credit for revenue it did not create.

The inverse is also true. A subscriber who received the browse abandonment email, did not click it, but returned to the site two weeks later and purchased is not attributed to the browse flow — even if the email was the trigger that kept the product in their consideration set.

Neither direction is perfectly accurate. Attribution is always an approximation. The goal is an approximation that is useful for decision-making.

Practical Attribution Recommendations

Tune the conversion window for browse abandonment. Klaviyo's default five-day window is calibrated for cart and checkout abandonment, flows where the purchase intent is high and the conversion window is genuinely short. Browse abandonment has lower intent and a longer typical consideration cycle, so a single default rarely fits. A three-day attribution window may be more accurate for lower-consideration purchases, because it credits fewer orders that would have happened anyway; a seven-day window may be appropriate for higher-ticket categories. Test different windows and compare the attributed revenue against an independent measure of the flow's impact, such as a holdout test.

Use Klaviyo's multi-touch reporting where available. Klaviyo's advanced reporting features allow you to see email-assisted conversions — orders where a browse abandonment email was in the subscriber's path but not the last touch before purchase. This gives a more complete picture of the flow's contribution to the customer's decision, even when it didn't directly generate the final click.

Run a holdout test to measure true incrementality. The most rigorous way to measure browse abandonment's actual contribution is a holdout test: randomly suppress the flow for a defined percentage of eligible subscribers — typically 10 to 20 percent — over a defined period, and compare their conversion rate and revenue to the non-suppressed group.

The difference in conversion rate and revenue between the holdout group and the non-holdout group is the flow's true incremental contribution. Not the attributed revenue. Not the conversion rate. The incremental lift from actually sending the emails, measured against the counterfactual of not sending them.
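As a rough sketch of the arithmetic, the comparison looks like this. The group sizes and revenue totals are hypothetical, and revenue here means all revenue from each group during the test window, not platform-attributed revenue.

```python
def incremental_lift(treated_n: int, treated_revenue: float,
                     holdout_n: int, holdout_revenue: float) -> dict:
    """Compare revenue per subscriber between the group that received the flow
    and the randomly suppressed holdout group."""
    treated_rpr = treated_revenue / treated_n
    holdout_rpr = holdout_revenue / holdout_n
    incremental_rpr = treated_rpr - holdout_rpr
    return {
        "treated_rpr": treated_rpr,
        "holdout_rpr": holdout_rpr,
        "incremental_rpr": incremental_rpr,
        # Extra revenue the flow produced across everyone who received it.
        "incremental_revenue": incremental_rpr * treated_n,
    }


# Hypothetical test: a 10 percent holdout out of 10,000 eligible subscribers.
result = incremental_lift(
    treated_n=9_000, treated_revenue=16_200.0,
    holdout_n=1_000, holdout_revenue=1_100.0,
)
for key, value in result.items():
    print(f"{key}: {value:,.2f}")
```

The holdout group still buys without the emails, so only the gap between the two groups counts as the flow's contribution. With a small holdout the estimate is noisy, which is one reason to run the test over a defined period long enough to collect meaningful volume.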

Holdout testing is underused in DTC email marketing, primarily because it requires deliberately suppressing revenue in the short term to measure the flow's true value. Brands that run holdout tests on their browse abandonment flows consistently report that attributed revenue overstates true incremental contribution — sometimes significantly. The overstatement does not mean the flow isn't valuable. It means you're making decisions based on a number that is larger than reality, which affects everything from resource allocation to the discount calculation from Part 6.

If you can run one measurement improvement on your browse abandonment program this year, make it a holdout test.

The A/B Testing Architecture

A/B testing in browse abandonment is different from A/B testing campaigns in one critical way: the send volume is lower and the send cadence is continuous rather than episodic.

A campaign A/B test can reach its full audience in 24 to 48 hours and produce statistically significant results in days. A browse abandonment A/B test sends to subscribers as they qualify — one by one, as they trigger the flow — and may take weeks or months to accumulate sufficient sample size for reliable conclusions.

This changes how you design tests, how long you run them, and how you interpret results.

What to Test, In Priority Order

Not all test variables produce equal lift. Some decisions have much larger impact on RPR than others. Testing in priority order — starting with the highest-leverage variables — produces the fastest compounding improvement.

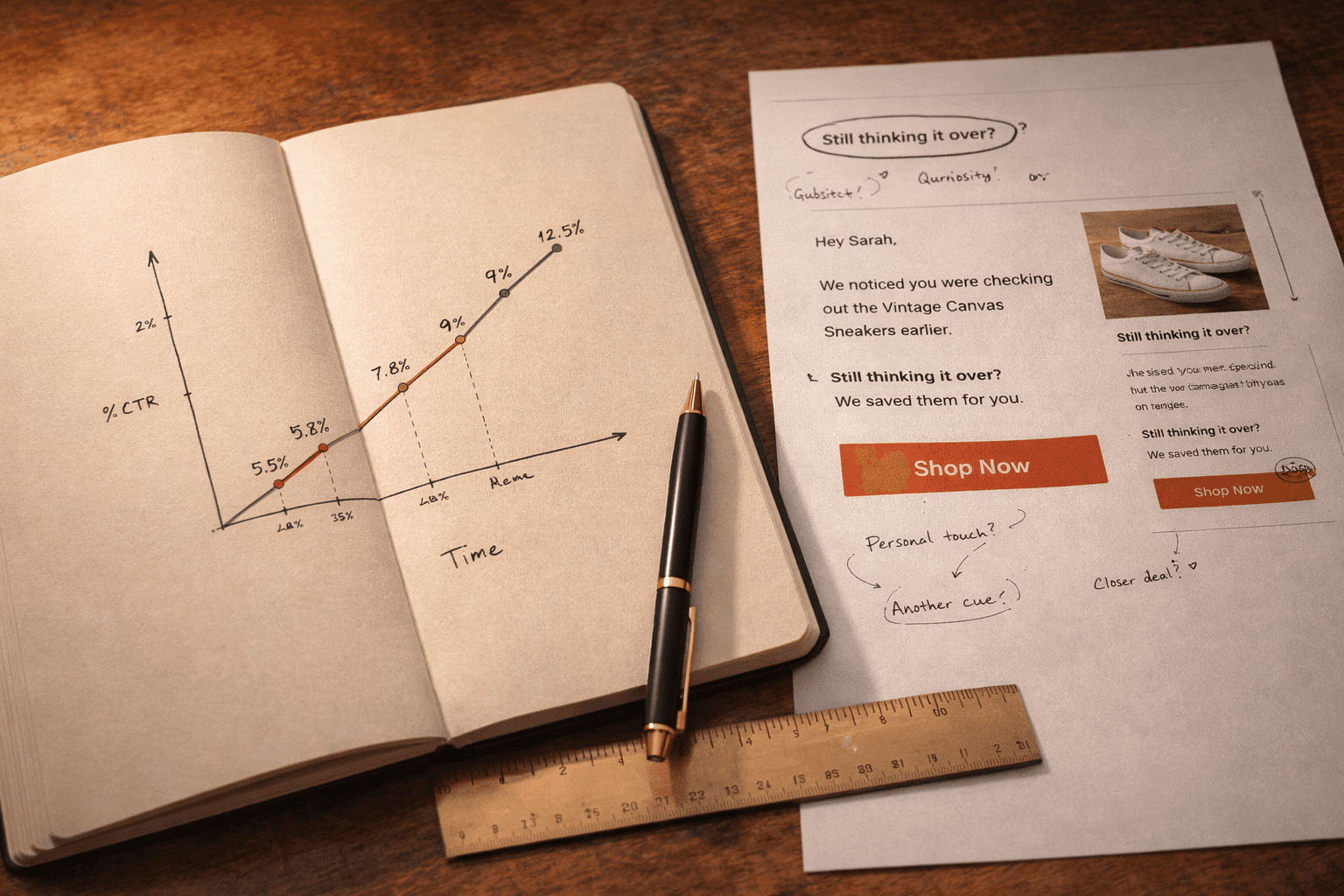

1. Send timing of Email 1 (highest leverage)

The delay between the browse session and the first email is one of the highest-impact variables in the flow and one of the easiest to test. A/B test 1–2 hours versus 3–4 hours, measuring RPR. Depending on your product category and audience, the difference in RPR can be substantial — sometimes in favor of the faster send, sometimes in favor of giving more space.

This test is high priority because it has flow-level impact: the timing affects every email in the sequence, not just one.

2. Email count (one versus two versus three emails)

The most important structural question in your browse abandonment sequence is whether adding an email adds RPR or simply adds sends. Test your current email count against a reduced sequence — if you're running three emails, test two. If you're running two, test one.

The metric to watch: total flow RPR, not individual email RPR. An additional email will almost always generate some incremental revenue. The question is whether the incremental revenue from the additional send exceeds its cost in list fatigue, unsubscribes, and the dilution of the signal that an earlier email represents.

Many brands find that a two-email sequence produces 85 to 90 percent of the RPR of a three-email sequence, with significantly lower unsubscribe rates. That trade-off is often the right one.

3. Subject line

Subject line testing is the most commonly run A/B test in email marketing and the most frequently misinterpreted. The common mistake: testing subject lines against open rate, calling the winner, and moving on.

Open rate is the wrong metric for subject line testing in the post-MPP environment. Test subject lines against CTOR or RPR. A subject line that generates more opens but fewer qualified clicks — because it attracts curious but unconvinced subscribers — produces a lower RPR than a subject line that generates fewer opens but attracts subscribers with higher purchase intent. The latter is the better subject line for conversion purposes, even if it loses on raw open rate.

Run Jay Schwedelson's highest-impact tactics as test variants: short subject line with blank preheader against a standard-length subject line with preheader text. First-person CTA framing against third-person. "Made for you" against product-named subject lines. Test one variable at a time.

4. Discount versus no discount for the first-time buyer segment

If you're uncertain whether to offer an incentive in your browse abandonment flow, this is the test that resolves it — not a philosophical argument, but your own data. A/B test Email 3 in your first-time buyer path: one variant with a discount code, one without. Measure at 90-day LTV, not conversion rate. The result answers the discount question for your specific brand and audience more reliably than any industry benchmark.

5. Plain text versus HTML for Email 2 or Email 3

As Part 4 and Part 5 both discussed, plain text emails perform surprisingly well as later emails in browse abandonment sequences — particularly as the final email in a high-intent browser path. Test a plain text version of Email 2 or Email 3 against your current HTML version. The lift potential is significant for the right brand and the right segment, and the test is inexpensive to run.

6. CTA copy

First-person CTA copy versus third-person. Specific product-named CTAs versus generic. "Show me [product name]" versus "View [product name]" versus "Take another look." These tests are low-setup and produce reliable signal quickly because they directly measure the click decision.

Test Design Standards

One variable per test. Multi-variable tests produce uninterpretable results when sample sizes are below several thousand conversions per variant. Browse abandonment flows rarely accumulate sample sizes large enough to support multi-variable testing. Test one thing. Wait for the result. Then test the next thing.

Statistical significance before calling a winner. The minimum threshold for calling a test result is 95 percent confidence, meaning that if the variants truly performed the same, a difference as large as the one observed would show up less than 5 percent of the time. Klaviyo's built-in A/B testing displays significance scores. Do not call a winner before reaching 95 percent confidence, regardless of how promising the early results look.
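If you want to sanity-check a result outside the platform, one common approach is a two-proportion z-test. The sketch below uses only the Python standard library; it is a generic textbook test, not necessarily the method Klaviyo uses internally, and the conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference in conversion rate between variants.

    Returns (z, p_value). A p_value below 0.05 corresponds to the
    95 percent confidence threshold.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: control 100 orders from 5,000 sends, variant 130 from 5,000.
z, p = two_proportion_z_test(100, 5000, 130, 5000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```

Checking the p-value repeatedly and stopping the moment it dips below 0.05 inflates false positives, so decide the sample size in advance and evaluate once it is reached.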

Jeanne Jennings's optimization methodology is emphatic on this point: early test results are systematically misleading. The variant that looks like a clear winner in the first week often converges with the control by week three. Wait for statistical significance. The patience required is the discipline that separates rigorous testing from intuition dressed up as data.

Minimum sample size planning. Before running a test, calculate the minimum sample size required to detect a meaningful difference. For browse abandonment conversion rate tests, a reasonable assumption is that you need at least 500 conversions per variant to detect a 20 percent relative improvement with 95 percent confidence. If your browse flow processes 2,000 eligible subscribers per month with a 2 percent conversion rate, it produces 40 conversions per month, or 20 per variant in a two-way split, meaning the test will take 25 months to reach significance.
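The planning arithmetic can be sketched in a few lines. The first function reproduces the rule-of-thumb calculation above. The second uses a standard two-proportion sample size formula as a cross-check; its 80 percent power setting is an assumption added here, since the text specifies only the 95 percent confidence level.

```python
import math
from statistics import NormalDist


def months_to_target(eligible_per_month: int, conversion_rate: float,
                     target_conversions: int = 500, variants: int = 2) -> float:
    """Months needed for each variant to reach the target conversion count."""
    conversions_per_variant = eligible_per_month / variants * conversion_rate
    return target_conversions / conversions_per_variant


def recipients_per_variant(baseline: float, relative_lift: float,
                           alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate recipients per variant for a two-sided two-proportion test."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


months = months_to_target(eligible_per_month=2000, conversion_rate=0.02)
needed = recipients_per_variant(baseline=0.02, relative_lift=0.20)

print(f"months to reach 500 conversions per variant: {months:.0f}")
print(f"recipients per variant (formula): {needed:,}")
print(f"implied control conversions: {needed * 0.02:.0f}")
```

Under those assumptions the formula lands in the same range as the 500-conversion rule of thumb, which suggests the rule is a reasonable planning shortcut for a 2 percent baseline.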

This is not a reason to avoid testing. It is a reason to prioritize your test queue carefully, test high-leverage variables first, and accept that some tests will require multi-month patience. It is also a reason to invest in list growth — a larger eligible audience means faster test cycles and more iterations per year.

Document everything. Every test should be logged: the hypothesis, the variant design, the start date, the end date, the result, and the decision made. Build a test log in whatever format your team will actually maintain — a shared spreadsheet, a Notion document, a Klaviyo note. The log serves two purposes: it prevents re-running tests you've already run, and it builds institutional knowledge that survives team member turnover.
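For teams that keep the log in code or export it to a spreadsheet, one possible shape for an entry is sketched below. The class and field names are hypothetical; they simply mirror the six items listed above.

```python
from dataclasses import dataclass, asdict
from datetime import date
from typing import Optional


@dataclass
class ExperimentLogEntry:
    """One row in the test log: what was tested, when, and what was decided."""
    hypothesis: str
    variant_design: str
    start_date: date
    end_date: Optional[date] = None      # None while the test is still running
    result: Optional[str] = None
    decision: Optional[str] = None

    def is_open(self) -> bool:
        return self.end_date is None


# Hypothetical entry for the send-timing test described earlier.
entry = ExperimentLogEntry(
    hypothesis="A 1-2 hour delay on Email 1 produces higher RPR than 3-4 hours",
    variant_design="A: 1-2 hour delay, B: 3-4 hour delay, metric: flow RPR",
    start_date=date(2026, 1, 5),
)

print(entry.is_open())
print(asdict(entry))  # plain dict, easy to write out as a spreadsheet row
```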

The Quarterly Audit: What to Check, When, and Why

Beyond A/B testing, browse abandonment optimization requires a systematic audit cadence — a scheduled review of the flow's technical integrity, audience health, and performance trends that catches the silent drift most brands don't notice until it has already cost meaningful revenue.

Set a quarterly audit as a recurring calendar event. It takes two to three hours. Here is the checklist.

Technical Integrity Check

Dynamic product block rendering. Go into Klaviyo's preview tool and pull the last 10 to 20 Viewed Product events from your activity feed. Render each email against a real event. Verify that the product image, name, price, and URL are all populating correctly. A product that was updated, renamed, discontinued, or whose image URL changed will cause the dynamic block to render incorrectly — showing a broken image, the wrong product name, or a dead link. This happens silently. The email sends. The block renders incorrectly. The subscriber sees a broken email. Find it before it sends to 5,000 people.

Flow filter integrity. Verify that all four flow filters — Added to Cart, Started Checkout, Placed Order, and the re-entry throttle — are still active and correctly configured. Klaviyo periodically updates its metric names and filter interface. A filter that was correctly set up six months ago may reference a renamed metric that no longer matches the active event. Spot-check that subscribers who placed orders are not receiving browse abandonment emails.

Smart Sending status. Confirm Smart Sending is still active on all emails in the flow. It can be inadvertently turned off during template edits.

Unsubscribe link presence. Confirm every email in the flow has a functioning, one-click unsubscribe link. This is legally required in most jurisdictions and operationally critical for deliverability.

Mobile rendering. Re-test the flow emails on three current phone screen sizes. Phone screen sizes and email client rendering behavior change. An email that rendered correctly at launch may have rendering issues on newer devices.

Audience Health Check

Engagement composition. Pull the segment of subscribers who have entered the browse abandonment flow in the last 90 days. What proportion of them are "engaged" — have opened or clicked an email in the last 90 to 180 days? If the engaged proportion is declining, your flow may be increasingly sending to unengaged contacts. Tighten the engagement filter.

Segment path distribution. How many subscribers are entering each of the four segment paths — first-time buyer, one-time buyer, loyal buyer, high-intent browser? A large shift in path distribution may indicate a change in your audience acquisition mix that should inform copy or offer adjustments.

Unsubscribe and complaint rate trends. Review the last three months of unsubscribe and spam complaint rates for browse abandonment emails specifically. A rising trend in either is an early warning sign that the flow is reaching the wrong audience, sending too frequently, or producing copy that is no longer resonating.

Performance Trend Review

RPR trend over 90 days. Plot monthly RPR for the browse abandonment flow over the last three months. Is it stable, trending up, or trending down? A declining RPR trend should trigger investigation. Common causes: dynamic block integrity issues, audience composition shift toward lower-intent or lower-AOV segments, copy that has aged and lost resonance, or competitive improvement that has made your emails look weaker by contrast.

Conversion rate by segment path. Compare conversion rates across your four segment paths. Is one path significantly underperforming relative to its historical average? A sharp decline in first-time buyer conversion rate may indicate a new acquisition channel is bringing in lower-intent subscribers who are less likely to convert from a browse flow. A decline in loyal buyer conversion rate may indicate the single email in that path needs a refresh.

Test results review. Review any A/B tests that concluded since the last audit. Implement winning variants. Close out inconclusive tests. Launch the next test from your priority queue.

Copy and Offer Refresh

Every six months — two audit cycles — conduct a copy review beyond performance metrics. Read each email in the flow from the perspective of a first-time subscriber receiving it today.

Does it still sound human and current? Or does it sound like it was written 18 months ago, for an audience that no longer perfectly matches your current subscribers?

Does it reflect your current brand voice? Brands evolve. A browse abandonment email written in the early days of a brand may have a tone that no longer fits the maturity and positioning of the brand today.

Does it address the current primary objection for that product category? VOC data changes. Customer concerns shift. What your product reviews said 18 months ago about the primary hesitation before buying may not match what they say now. Pull fresh reviews. Re-mine them. Update the objection-handling copy in Email 2 accordingly.

Austin Brawner, whose CXL Institute course on lifecycle email marketing has trained thousands of ecommerce operators, frames this as the distinction between a marketing program and a marketing system. A program runs until someone turns it off. A system is maintained, updated, and continuously improved. Browse abandonment — like every automated flow — should be a system.

The Compounding Logic: Why Incremental Improvements Matter More Than They Appear

The case for taking optimization seriously — for running the quarterly audit, for building the testing infrastructure, for measuring RPR instead of open rate — rests on a mathematical reality that is easy to state and easy to underestimate.

Compounding.

A browse abandonment flow that processes 5,000 eligible subscribers per month at a 2 percent conversion rate and $80 AOV generates $8,000 in monthly revenue. Annual revenue: $96,000.

Apply a series of incremental optimizations over 12 months — better send timing improves conversion rate by 10 percent, a refined subject line improves CTOR by 15 percent, a plain text Email 3 improves Email 3 conversion rate by 20 percent, a tightened audience filter improves overall conversion rate by 5 percent — and the compounding effect of those improvements produces a flow converting at roughly 2.8 percent, generating $11,200 per month.

Annual revenue: $134,400. An increase of $38,400 from incremental, patient, compounding optimization. No additional ad spend. No new subscriber acquisition. No fundamental restructuring of the program.
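The arithmetic behind that projection can be sketched as follows. Two assumptions are added here that the example does not state: the CTOR gain passes through fully to conversions, and Email 3 drives about a quarter of the flow's conversions. Change either and the final rate moves.

```python
ELIGIBLE_PER_MONTH = 5_000
AOV = 80.0
BASE_RATE = 0.02

# Improvements assumed to apply to the whole flow's conversion rate.
flow_level_lifts = {
    "send timing": 0.10,
    "subject line (CTOR)": 0.15,   # assumption: carries through to conversions
    "audience filter": 0.05,
}

# Email 3's lift only affects the share of conversions that Email 3 drives.
EMAIL3_LIFT = 0.20
EMAIL3_SHARE = 0.25                # assumption, not a figure from the text


def monthly_revenue(rate: float) -> float:
    return ELIGIBLE_PER_MONTH * rate * AOV


rate = BASE_RATE
for lift in flow_level_lifts.values():
    rate *= 1 + lift               # improvements multiply rather than add
rate *= 1 + EMAIL3_SHARE * EMAIL3_LIFT

baseline = monthly_revenue(BASE_RATE)
optimized = monthly_revenue(rate)

print(f"optimized conversion rate: {rate:.2%}")
print(f"monthly revenue: ${baseline:,.0f} -> ${optimized:,.0f}")
print(f"annual difference: ${(optimized - baseline) * 12:,.0f}")
```

Under these assumptions the rate comes out close to the roughly 2.8 percent used above; the exact dollar figures differ slightly from the rounded ones in the text.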

This is what Eli Weiss means when he says customer experience improvements compound. It is what Thomas Lalas means when he frames email optimization as a retention economics problem rather than a conversion rate problem. Each incremental improvement is small. The accumulated effect over time is the difference between a flow that generates five figures annually and one that generates six.

The brands running email programs that account for 20 to 30 percent of total revenue are not doing it because they built better flows. They are doing it because they built the habit of optimization — the consistent, data-driven, patient practice of making each flow incrementally better over time — and they have been doing it long enough for the compounding to express itself.

Bringing the Full Series Together

Over the seven chapters of this guide, we have built a complete browse abandonment email program from the ground up.

We started with strategy and philosophy — the foundational clarity that browse abandonment is a conversation, not a campaign; that it cannot substitute for product quality; that every email should pass the hospitable host test before it sends.

We built the segmentation architecture — four distinct audiences, each requiring different messaging, different offer logic, and different email counts, with suppression rules that protect both deliverability and customer relationships.

We covered the technical setup — the trigger, the flow filters, the dynamic product block configuration, and the server-side tracking investment that expands the eligible audience.

We applied the copywriting frameworks — VOC research, story structure, psychological state mapping, and subject line tactics backed by six billion emails of data.

We established the design principles — visual hierarchy that serves conversion, mobile-first requirements that are not optional, and a template architecture that scales without creating technical debt.

We worked through the discount debate — the full argument from both sides, and a five-question framework for making the right decision for your specific brand.

And now we have the optimization infrastructure — the metrics that matter, the A/B testing architecture, the attribution decisions, and the quarterly audit cadence that keeps the program improving.

What remains is the practice. The consistent, patient, compounding application of this framework over time.

The browse abandonment flow you build today will not be the one generating peak revenue six months from now. It will be the one you built, measured, tested, audited, and improved — one variable at a time, one quarter at a time, with the discipline to let data override intuition and the patience to wait for statistical significance before declaring a winner.

That practice is the competitive advantage. Not the technology. Not the platform. Not the template.

The practice.

Series Summary: The Complete Browse Abandonment Framework

For quick reference, here is the full architecture this series has built:

Philosophy: Browse abandonment is a conversation, not a campaign. Every email should feel like a warm host, not a tracking pixel. It amplifies what works — it cannot fix what doesn't.

Segments: Four paths — first-time buyer, one-time buyer, loyal buyer, high-intent browser — each requiring distinct messaging, offer logic, and email count.

Technical foundation: Viewed Product trigger, four flow-level filters, Smart Sending on, re-entry throttle set, Static block type for dynamic product content, server-side tracking evaluated.

Copy system: Subject lines built on Schwedelson's data, body copy built on VOC research and psychological state mapping, story structure that teaches before it sells, voice that sounds human before it sounds automated.

Design standard: Single column, product-forward, mobile-first, one CTA, dark mode tested, accessibility-compliant.

Incentive decision: Segment-specific, never in Email 1, non-cash alternatives evaluated first, measured at 90-day LTV not conversion rate.

Optimization cadence: Weekly RPR tracking, A/B tests in priority order, quarterly technical and audience audits, copy refresh every six months.

This is the system. Build it deliberately. Maintain it consistently. Improve it incrementally.

That is what a browse abandonment program that compounds looks like in practice.

This post is Part 7 — the final chapter — of The Ultimate Guide to Browse Abandonment Emails, a multi-part series synthesizing the frameworks, tactics, and philosophies of 25 of the world's top retention email marketing experts, published on Geysera.com.

Read the complete series:

Part 1: Browse Abandonment Strategy & Philosophy — What It Is and Why It Matters

Part 2: Segmentation & Trigger Architecture — Who Gets This Email, When, and Why

Part 3: Technical Setup — How to Build the Flow in Klaviyo

Part 4: Copywriting — The Words That Turn Window Shoppers Into Buyers

Part 5: Design & UX — The Visual Experience That Gets Clicks

Part 6: Discount Debate - When to Offer an Incentive

Part 7: Testing, Analytics & Optimization — How to Improve What's Already Running (you are here)